Our fear of killer robots might doom us all

At the very least, this technophobia seems destined to hamstring our economy

America seems gripped with what we might call Battlestar Galactica Syndrome.

In the TV show, society developed a technology (supersmart robots) that then produced unintended and terrible results (the near destruction of humanity). The syndrome sets in when a society, not wanting to make the same mistake twice, decides to ban the technology completely. We can't have killer robots imperil our species if we don't have smart robots to begin with!

The problem is you probably won't have autonomous cars and 1,000 other cool inventions, either. Fear of new technology can be stultifying.

You can see this problem in America today, with 100 artificial intelligence and robotics researchers, including Tesla's Elon Musk and Alphabet's Mustafa Suleyman, calling on the United Nations to ban or somehow limit autonomous weapons. The letter they just signed expresses concern that these AI technologies will make warfare more lethal and uncontrollable, especially for civilians.

But those aren't their only reasons for wanting a global agreement. One signee, deep-learning pioneer Yoshua Bengio, worries that weaponizing AI could actually "hurt the further development of AI's good applications," according to Fortune.

Here's the problem: Preemptively banning the military application of AI technologies also carries risks and trade-offs that researchers must consider. Indeed, such limits might actually impede development, the exact outcome researchers say they want to avoid. Many of the key technologies of the last half century were spurred along by military R&D and purchases, including computers, airplanes, nuclear power, and the internet. Throughout American history, "U.S. military support for the development of new technologies" has helped drive the economy, explains Michael Lind in his 2012 book Land of Promise: An Economic History of the United States.

Now, theoretically you could replace a lot of military financing and focus with more civilian spending. But in the U.S., at least, it is a lot easier to get Congress to cut checks for science research when the money is funneled through the Pentagon in the name of advancing national security.

Of course, major militaries, including those of the United States and China, already have big plans for AI technologies and are unlikely to turn back. This isn't about a particular kind of weapon such as landmines or cluster bombs, but rather a revolution in how warfare is conducted. And what president or prime minister wants to explain to the grieving parents of a fallen special forces soldier why the mission against a terrorist base wasn't handled by an autonomous drone?

Moreover, there is a plausible argument that these technologies will make war more precise and less harmful for civilians. Rules of engagement might be more closely followed by machines. The fog of war could be less opaque, for instance, if battle spaces were constantly scouted by swarms of tiny autonomous quadrocopters.

One also has to wonder whether these scientists are inadvertently contributing to an emerging technopanic. Already you have technologists talking about robot taxes to slow tech progress and prevent mass unemployment. And this is happening when unemployment is low. What happens the next time there's a recession followed by slow recovery? Will populist politicians call for banning customer kiosks in fast-casual restaurants? We need to hear more from scientists about the benefits of emerging technologies and how those benefits can be broadly enjoyed.

The big problem with technological progress is that we don't have enough of it, or enough of it spread widely throughout the economy. If the American economy is going to grow at least as fast in the future as in the past, we need more invention and innovation — even if some of it ends up on the battlefield.

James Pethokoukis is the DeWitt Wallace Fellow at the American Enterprise Institute where he runs the AEIdeas blog. He has also written for The New York Times, National Review, Commentary, The Weekly Standard, and other places.

-

'Horror stories of women having to carry nonviable fetuses'

'Horror stories of women having to carry nonviable fetuses'Instant Opinion Opinion, comment and editorials of the day

By Harold Maass, The Week US Published

-

Haiti interim council, prime minister sworn in

Haiti interim council, prime minister sworn inSpeed Read Prime Minister Ariel Henry resigns amid surging gang violence

By Peter Weber, The Week US Published

-

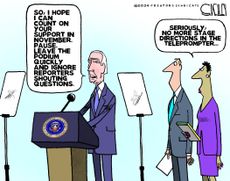

Today's political cartoons - April 26, 2024

Today's political cartoons - April 26, 2024Cartoons Friday's cartoons - teleprompter troubles, presidential immunity, and more

By The Week US Published